
Comments

-

MSP360 powered by AWS

No, I'm not an MSP. I just handle my own backups. That might be the cause, then. Thanks.

-

MSP360 powered by AWS

Hi David,

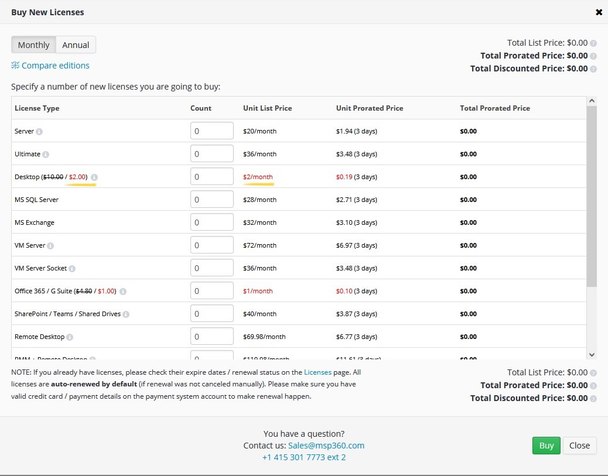

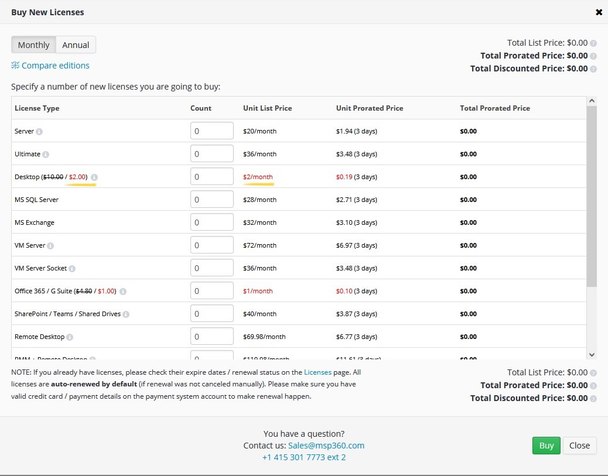

I get the $2 price when I try to buy new licenses through the console; please see the attached screenshots. I only have an "MSP360 (Amazon S3)" storage account. It seems the support ticket was transferred to Sales on the 22nd, with no further updates since.

Thanks,

-

Add to Backup Storage files already backed up

It worked!

I changed the backup plan type from Custom to Simple, then ran a Consistency Check, and it picked up all the previously uploaded images! :starstruck:

Thank you, David.

-

Add to Backup Storage files already backed up

I do not have that option enabled. Inside the container there's just a folder containing all the images. Should I still follow the steps in the link you provided earlier?

-

Add to Backup Storage files already backed up

Sorry, David, I said "simple mode" when I meant "custom mode".

This is the situation:

I have about 70 burned Blu-ray discs with data that I keep as my local archive. They are dual- and triple-layer discs, storing 46 or 92 GB each. I want an exact copy of them in Azure Storage using the Archive tier. For each disc, I first extract the image, store it temporarily on my PC, and then upload the ISO file to the cloud. Once the image has been copied to Azure, I delete the ISO from my PC and repeat the same steps with the next disc.

I'm currently about 60% of the way through the whole process (~2500 GB), and it has taken me about three weeks. I want to avoid starting over from scratch at all costs.

So, if it's possible to track files that are already in the cloud but were not uploaded by CBB Ultimate, perfect. If not, it's not a big problem, since this is a special case: those files are a deep archive that may never be restored.

When I started the process, I only had CBB Desktop, and with its 5 TB limit I didn't want to use up the quota on this case (~4 TB total). Now, with Ultimate, I'm free of that limit, so I wouldn't mind storing the profile in the cloud. Remember, though, that Azure Archive is like Glacier: the files are not immediately available and can take up to 15 hours to become readable.

I could move the images to "hot" storage, but first I need to know whether it's possible to track the files in this scenario.

-

Add to Backup Storage files already backed up

> I think you are asking the following

Yes, that's it. I uploaded about 1.5 TB of Blu-ray ISO images with CloudBerry Explorer for Azure (and then set them to the Archive tier), so they are not "registered" by any CloudBerry Backup plan.

Yesterday I got CloudBerry Backup Ultimate and, since I no longer have storage restrictions, I would like to manage all those files, as well as the new ones I'll keep uploading, with CB Backup. I looked for options, but it seems it's not possible to track the old files.

> unless you use the simple backup option

I'm using that mode.

-

How to connect to my MS Azure RA-GRS storage account secondary location?

Thank you, Denis, that sounds great.

-

How to connect to my MS Azure RA-GRS storage account secondary location?

It would be enough to have that feature in Explorer and/or Drive. For instance, when a storage account is added with the "-secondary" suffix, access it as read-only. Drive already has this as an option. I don't see any reason not to be able to access this copy when I can do it with a web browser.

I know MS will eventually fail over from the primary to the secondary location, but with RA-GRS I have the right to read that secondary location at any time; with plain GRS, I can't.

As for use cases, here is one example: the other day I deleted a bunch of files by mistake. Recovering them one by one with Chrome would have been a nightmare, but if I had had access through CB Explorer or Drive, I could have tried to copy those files back to the primary location (taking advantage of the replication delay). Sadly, as things stand now (though CB products are still good for me), I had to re-upload all the files (about 1.5 TB, which took me a week).

I'm not asking for anything fancy, just access. I hope you understand my point. :halo:

-

How to connect to my MS Azure RA-GRS storage account secondary location?

Well, just as Microsoft intended, read access to a secondary location is why I pay extra for my Azure subscription. Getting read access from CloudBerry software would be perfect, especially when dealing with many files. :smile:

Is that really difficult to implement? In the browser, I only need to add "-secondary" to the account name in the URL.
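As a rough illustration of how small the change is: for a standard public-cloud account, the RA-GRS read-only endpoint is the primary host with `-secondary` appended to the account name (e.g. `myaccount.blob.core.windows.net` becomes `myaccount-secondary.blob.core.windows.net`). The sketch below only rewrites the URL. The function name, the account `myaccount`, and the container `backups` are hypothetical, and it assumes the `<account>.<service>.core.windows.net` host form (no custom domains or sovereign clouds). It is not a CloudBerry feature.

```python
from urllib.parse import urlsplit, urlunsplit


def secondary_endpoint(primary_url: str) -> str:
    """Rewrite an Azure Storage URL to its RA-GRS read-only secondary endpoint.

    Hypothetical helper; assumes the standard public-cloud host form
    <account>.<service>.core.windows.net.
    """
    parts = urlsplit(primary_url)
    host = parts.hostname or ""
    # Split "myaccount.blob.core.windows.net" into "myaccount" and the rest.
    account, sep, rest = host.partition(".")
    if not sep or not rest.endswith(".core.windows.net"):
        raise ValueError(f"not a standard Azure Storage host: {host!r}")
    if account.endswith("-secondary"):
        return primary_url  # already points at the secondary location
    netloc = f"{account}-secondary.{rest}"
    if parts.port:
        netloc += f":{parts.port}"
    # Path, query string and fragment are kept unchanged.
    return urlunsplit((parts.scheme, netloc, parts.path, parts.query, parts.fragment))
```

For example, `secondary_endpoint("https://myaccount.blob.core.windows.net/backups/disc01.iso")` returns `https://myaccount-secondary.blob.core.windows.net/backups/disc01.iso`. Requests against that host are read-only, so a tool would only list and download from it.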

sanferno

© 2025 MSP360 Forum